Talent Index — AHP-Weighted Scoring for AI Roles

A job description (JD) fingerprinting tool that analyses job descriptions across 10 skill dimensions, creating a shared quantitative language for AI talent evaluation across 13 labs.

106 Job Descriptions, No Common Language

Every AI lab writes job descriptions differently. Anthropic emphasises alignment instincts. UK AISI foregrounds policy-aware engineering. Goodfire looks for mechanistic interpretability depth. Comparing roles across these labs meant reading each JD manually, guessing at what skills actually mattered, and hoping your intuition held.

Without a shared vocabulary, talent teams couldn’t benchmark roles, candidates couldn’t compare opportunities, and hiring managers couldn’t articulate what ‘senior’ meant in their specific context.

AHP-Weighted Scoring Brings Order to a Fragmented Landscape

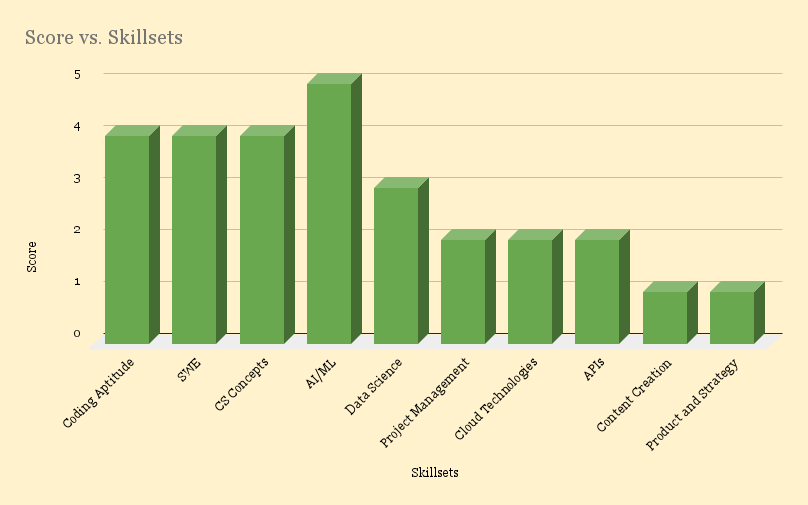

Engineered a Talent Index scoring framework using Analytic Hierarchy Process (AHP) weighting, in which each dimension's weight is derived from pairwise judgements of relative importance rather than assigned directly. Built a JD fingerprinting tool that analyses job descriptions across 10 skill dimensions, creating a shared, quantitative language for AI talent evaluation.
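To make the weighting step concrete, here is a minimal sketch of how AHP turns pairwise comparisons into weights and a single role score. It is illustrative only: the three dimension names, the comparison values, and the per-dimension scores are hypothetical, not the ten dimensions or the judgements used in the Talent Index, and the row geometric-mean method shown is one common approximation of the AHP priority vector rather than a confirmed detail of this project.

```python
import math

# Saaty's random consistency index for matrix sizes 3 to 10.
RANDOM_INDEX = {3: 0.58, 4: 0.90, 5: 1.12, 6: 1.24,
                7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def ahp_weights(pairwise):
    """Approximate AHP priority weights with the row geometric-mean method."""
    n = len(pairwise)
    geo_means = [math.prod(row) ** (1.0 / n) for row in pairwise]
    total = sum(geo_means)
    return [g / total for g in geo_means]


def consistency_ratio(pairwise, weights):
    """Return CI / RI; values below roughly 0.1 are usually treated as acceptable."""
    n = len(pairwise)
    # Estimate lambda_max as the mean of (A w)_i / w_i.
    aw = [sum(pairwise[i][j] * weights[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / weights[i] for i in range(n)) / n
    consistency_index = (lambda_max - n) / (n - 1)
    return consistency_index / RANDOM_INDEX[n]


def weighted_score(weights, dimension_scores):
    """Collapse per-dimension scores (0 to 1) into one AHP-weighted score."""
    return sum(w * s for w, s in zip(weights, dimension_scores))


# Hypothetical dimensions: alignment, engineering, policy.
# pairwise[i][j] says how much more important dimension i is than dimension j.
pairwise = [
    [1.0, 2.0, 4.0],
    [0.5, 1.0, 2.0],
    [0.25, 0.5, 1.0],
]

weights = ahp_weights(pairwise)
cr = consistency_ratio(pairwise, weights)
score = weighted_score(weights, [0.8, 0.6, 0.2])  # hypothetical JD scores
```

The consistency ratio is worth checking before trusting the weights: a high value signals that the pairwise judgements contradict each other and should be revisited.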

106 job descriptions from leading labs were processed, fingerprinted, and clustered. Hiring managers can now see exactly how their role compares to the market. Candidates can map their skills against real demand. And recruiters finally have a compass instead of a guess.
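One way to picture the "compare to the market" step is to treat each fingerprint as a vector of dimension scores and measure how closely a role aligns with the average of the others. The sketch below assumes cosine similarity against a market centroid; the function names, the four-dimension vectors, and the choice of similarity measure are all hypothetical stand-ins, not the tool's confirmed method.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two fingerprint vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def market_centroid(fingerprints):
    """Average fingerprint across a set of roles, dimension by dimension."""
    n = len(fingerprints)
    return [sum(column) / n for column in zip(*fingerprints)]


# Hypothetical fingerprints, shortened to four dimensions for readability.
market = [
    [0.9, 0.4, 0.2, 0.7],
    [0.7, 0.6, 0.1, 0.8],
    [0.8, 0.5, 0.3, 0.6],
]
my_role = [0.3, 0.9, 0.8, 0.2]

centroid = market_centroid(market)
similarity_to_market = cosine_similarity(my_role, centroid)
```

A similarity near 1 would suggest the role emphasises the same dimensions as the market average, while a lower value flags a role with an unusual skill mix.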